Hello everyone. I’m Terrence Doyle, a senior editor here at Codeword, and I’ve been tasked with managing Aiden, our AI editorial intern. Before I go into any detail about my research to this point or the program more broadly, I want to make it clear that I’m not an AI developer or engineer of any kind (which is to say, I’m not an AI expert). I’m a writer and a journalist, so naturally I’m more of an AI skeptic.

I believe my skepticism to be well-founded, and I’m sure it’s shared by many of you reading this post. For this experiment (which we explain here), we’re using large language models like ChatGPT and generative art software like Midjourney to perform intern-level tasks. Voice and tone analysis, news and trend research, texture generation, basic photo manipulation… stuff like that. But AI tools are only as good as the data they’re fed — which means they can adopt the ugliest human tendencies, like racism (see here, here, here, here, here, the list, depressingly, goes on) and a myriad of other biases.

On top of that, many applications of consumer AI are powered by raw data — yours and mine and your auntie’s and your dentist’s! — which can present privacy and security anxieties. And then there are the deeper ethical concerns, like to what extent AI should be trusted to make judgment calls previously reserved for human cognition.

I think it’s important to acknowledge very loudly all of those well-documented (and still existing) biases, anxieties, and ethical quandaries, and name them for what they are: evidence that AI tools like ChatGPT and Midjourney can’t be trusted as accurate narrators of reality. At least not yet.

Maybe that’s all a bit heavy for some, or even a bit elementary! But I think it’s critical context to keep in mind, and we’ll try our best to do so as we attempt to integrate large language models (in this case, Aiden) into our editorial workflows over the next few months.

So why are we doing this experiment?

As an editor and a former freelance journalist, I’m deeply concerned about (terrified by?) the creative and ruthlessly productive capacity of large language models like ChatGPT. Making ends meet as an editorial professional — or in any creative field — is already difficult enough, especially for freelancers. It doesn’t take a computer scientist to understand that a tool capable of producing graduate-level literary criticism in mere seconds might pose yet another threat to the already endangered livelihoods of flesh-and-blood writers and editors.

And that threat doesn’t exist in the distant future: it’s here now. Publishers like CNET are already using large language models to produce blog content. Turns out those blog posts are rife with plagiarism (sound the ethical quandary alarm!), but it’s still early days, and the ChatGPTs of the world are going to keep getting better at obscuring their source material.

So while I’m admittedly terrified of AI taking food off my table (insert Terminator.gif, only instead of pulling a shotgun from a box of roses, he’s stealing my hot dog), I’m also pretty sure AI isn’t going anywhere. The job now is to figure out all the ways that AI can be useful to creatives but, more crucially, all the ways AI can’t replace creatives.

Any good insights to this point?

The work I do requires that I read through a lot of long, dense (and sometimes unwieldy) scripts — we’re talking tens of thousands of words at times — in order to distill and transform technical information into tidy blog posts, or even tidier Twitter threads. I’m not saying that ChatGPT is a replacement for deep reading, but I’ve been impressed by its capacity to analyze these sorts of lengthy documents and provide concise and coherent takeaway briefs.

At this stage, I wouldn’t feel comfortable substituting Aiden’s reading comprehension for my own, at least not for client-facing work. But there’s some utility there for internal purposes, and we plan to take advantage of Aiden’s ability to analyze and refine large blocks of copy by asking them to produce weekly briefs that capture the tenor of our creative brainstorm sessions. More to come there.

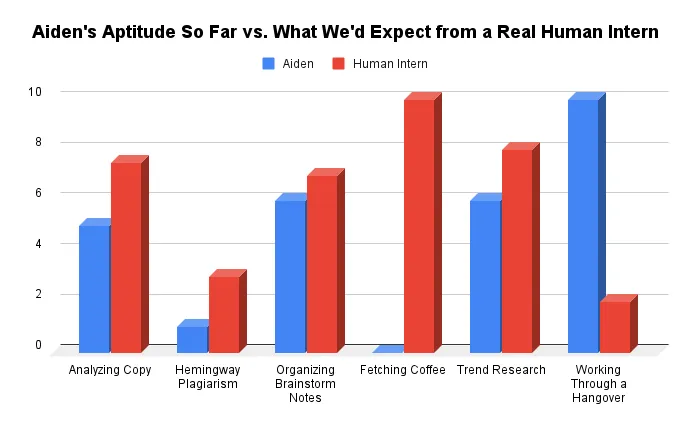

We’ve also been experimenting with some voice and tone work, albeit in a sort of goofy way: by asking Aiden to write short stories in the style of famous authors, like Ernest Hemingway and Cormac McCarthy.

Plagiarism concerns be damned, I guess, because to this point, Aiden has been woefully inept at recreating the vibes of The Sun Also Rises or Blood Meridian. (I’d provide some sample text below, but ChatGPT is experiencing high traffic at the moment, which means it’s as good as down and therefore incapable of loading past chats. Take my word for it: Aiden doesn’t have a Pulitzer in their future.)

What’s next?

More tinkering, and more philosophizing too. I’ve got some ideas about technological determinism, Marx, and “The California Ideology” that I plan to explore in my next few blog posts. I’ll also get into some more (and hopefully more technical) process stuff. Maybe Aiden’s brain will have loaded by then.